David Kilpatrick wrote:

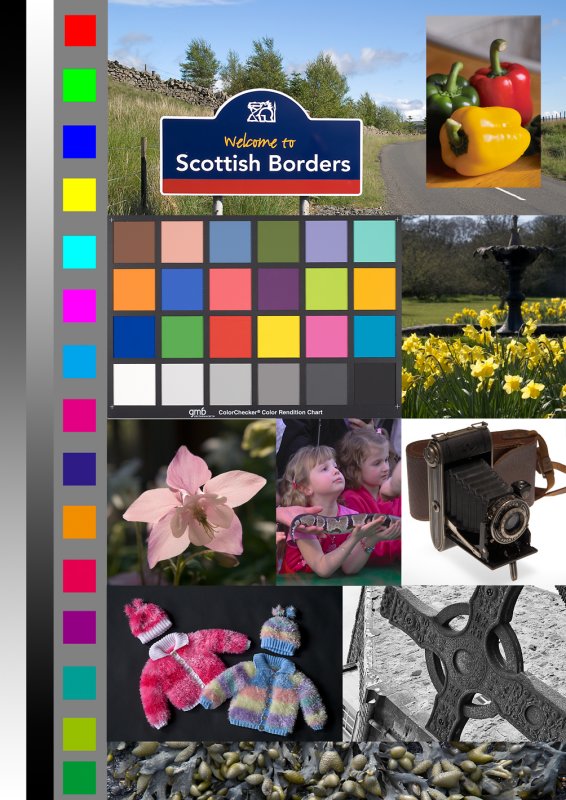

No, the RGB blocks are primaries - full yellow, full magenta, etc - straight mixes.

Depending on how your system manages the sRGB source, they will open with slightly adjusted values, not exactly 50/100 etc.

I assume that you are referring to the color blocks in a vertical column at the left of the image. In sRGB mode, the readouts of the top six of those blocks on my system are almost perfect - the greatest variation from theory of any component is only 0.4 %, and most are spot on. As I go further down the column the variation from what I think I should get becomes larger, but that may be a misunderstanding on my part of what to expect. (What values should I expect to see for the last ten blocks in that column on a properly calibrated monitor?)

What I was referring to in my message above was the readouts that I get from the colors in the Macbeth chart in your image. I was wondering whether a difference between the readouts of those blocks and the values published by X-Rite indicates an error in the calibration of my monitor. For example, for Block 16 - which is called "yellow" in the published table - the quoted values are R = 231, G = 199, B = 31. The values I measure on my screen from your image are R = 255, G = 224, B = 51.

Checking all of the color blocks in the Macbeth portion of your image against the published values, I get the following results:

Average variation: Red = 7.8 % Green = 16.1 % Blue = 14.2 %

Standard deviation: Red = 35.2 % Green = 8.4 % Blue = 19.5 %
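
A minimal Python sketch of one way figures like these could be computed follows. It assumes that "variation" means the difference between the measured and the published value, expressed as a percentage of the published value, which is only one possible definition (a percentage of the full 0-255 scale would give different numbers). The function name `percent_variation` is made up for illustration, and only Block 16 is filled in, since that is the only block whose values are quoted here.

```python
from statistics import mean, pstdev

def percent_variation(measured, published):
    """Per-channel (R, G, B) difference as a percentage of the published value.

    Assumed definition: 100 * (measured - published) / published.
    A published value of 0 would need special handling (division by zero).
    """
    return tuple(100.0 * (m - p) / p for m, p in zip(measured, published))

# Block number -> (R, G, B). Only Block 16 is quoted in this thread;
# the other blocks would be added in the same way.
published = {16: (231, 199, 31)}
measured = {16: (255, 224, 51)}

# One row of per-channel percentages for each block.
rows = [percent_variation(measured[block], published[block]) for block in published]

# Transpose the rows into one column per channel, then summarize each channel.
for name, column in zip(("Red", "Green", "Blue"), zip(*rows)):
    # pstdev is the population standard deviation; it is 0 for a single block.
    print(f"{name}: average = {mean(column):.1f} %, std dev = {pstdev(column):.1f} %")
```

With a relative definition like this one, a small published value (such as B = 31 in Block 16) turns a modest absolute difference into a large percentage, so the choice of definition matters a good deal when comparing channels.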

I realize that I may misunderstand how your image should be interpreted, and the values in the Macbeth portion of your image may have nothing to do with a calibration error of my monitor. However, at the moment I am assuming that they do, and am fumbling around attempting to figure out what to do to ensure that my monitor calibration is not going to introduce any errors on top of the ones that I make myself - which are plentiful enough that I need no additional issues.

With best wishes,

- Tom -